Storage Spaces Direct can use cache disks if you have provided SSDs or NVMe SSDs in your nodes. Normally the capacity disks are bound to the cache disks round-robin; see the official Microsoft doc here.

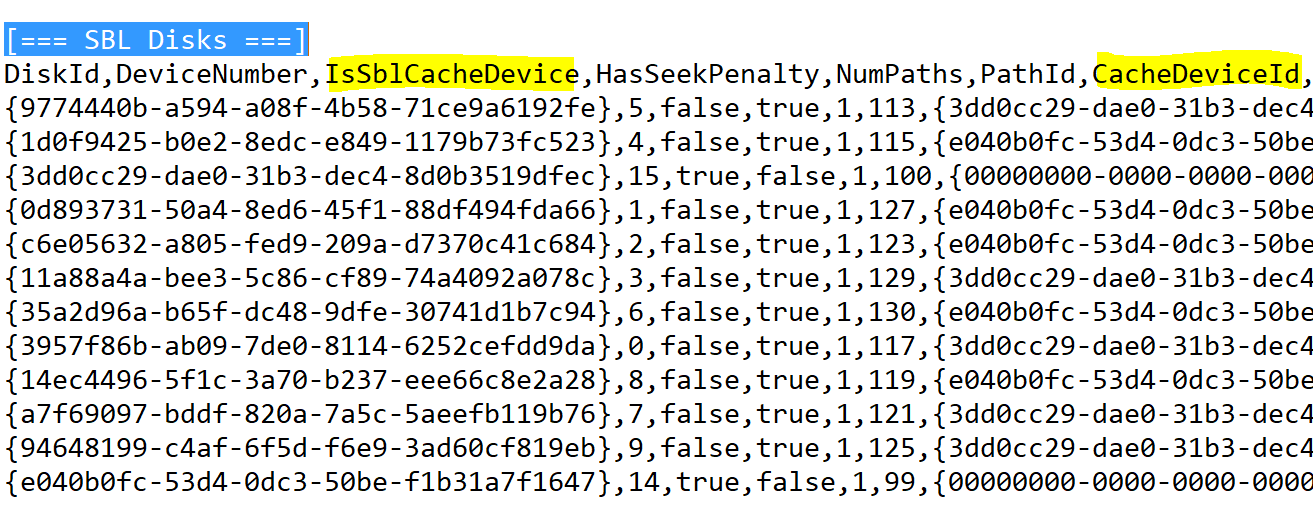

If you suspect or see that one node is not getting the right performance numbers, you might wonder whether your cache devices are being used properly. The right way to check this is to run Get-ClusterLog on your node, which produces a log of all cluster components, including the cache devices. In the file, scroll down to the ‘[=== SBL Disks ===]’ section, which looks like this:

You will find a CSV-formatted list of the disks in your node. Pay attention to the highlighted columns; these are the ones you’re looking for. With this information you can see which disk is bound to which cache disk. If a disk doesn’t have a ‘CacheDeviceId’, it is either a cache disk itself or there is no cache device bound to it (booyah!).
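If you have already copied a cluster log off a node, the same check can be sketched offline. The Python below is a minimal sketch, not the script from this post. It assumes the section starts at the ‘[=== SBL Disks ===]’ line, that the first non-empty line after it is the CSV header, and that the section ends at the next ‘[===’ header. The column names other than ‘CacheDeviceId’, and the disk IDs in the sample, are made up for illustration, so adjust them to what your own log contains.

```python
import csv
import io

SECTION_HEADER = "[=== SBL Disks ===]"


def extract_sbl_rows(log_text):
    """Return the rows of the SBL Disks section as a list of dicts.

    Assumes: header line, then a CSV header row, then data rows,
    ending at the next '[===' section header or the end of the file.
    """
    lines = log_text.splitlines()
    start = next(
        (i for i, line in enumerate(lines) if line.strip() == SECTION_HEADER),
        None,
    )
    if start is None:
        return []  # section not present in this log

    section = []
    for line in lines[start + 1:]:
        stripped = line.strip()
        if stripped.startswith("[==="):
            break  # next section begins
        if stripped:
            section.append(stripped)
    return list(csv.DictReader(io.StringIO("\n".join(section))))


def split_by_binding(rows):
    """Split disks into those with a CacheDeviceId and those without."""
    bound = {}    # DiskId -> CacheDeviceId
    unbound = []  # DiskIds with an empty CacheDeviceId (cache disk or not bound)
    for row in rows:
        cache_id = (row.get("CacheDeviceId") or "").strip()
        if cache_id:
            bound[row["DiskId"]] = cache_id
        else:
            unbound.append(row["DiskId"])
    return bound, unbound


# Hypothetical sample; real logs have more columns and real GUIDs.
SAMPLE_LOG = """\
[=== Cluster ===]
name,value
[=== SBL Disks ===]
DiskId,DeviceNumber,CacheDeviceId,DiskState
{aaaa},1,{cccc},InitializedAndBound
{bbbb},2,{cccc},InitializedAndBound
{cccc},3,,InitializedAndBound
[=== Other ===]
"""

if __name__ == "__main__":
    bound, unbound = split_by_binding(extract_sbl_rows(SAMPLE_LOG))
    print("bound:", bound)
    print("no CacheDeviceId:", unbound)
```

A disk that ends up in the second list still needs a manual look, because an empty ‘CacheDeviceId’ alone does not tell you whether it is a cache disk or an unbound capacity disk.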

“Very cool, but you want me to do this for every node… MANUALLY?!”

Nah, I wrote you a PowerShell script 😉

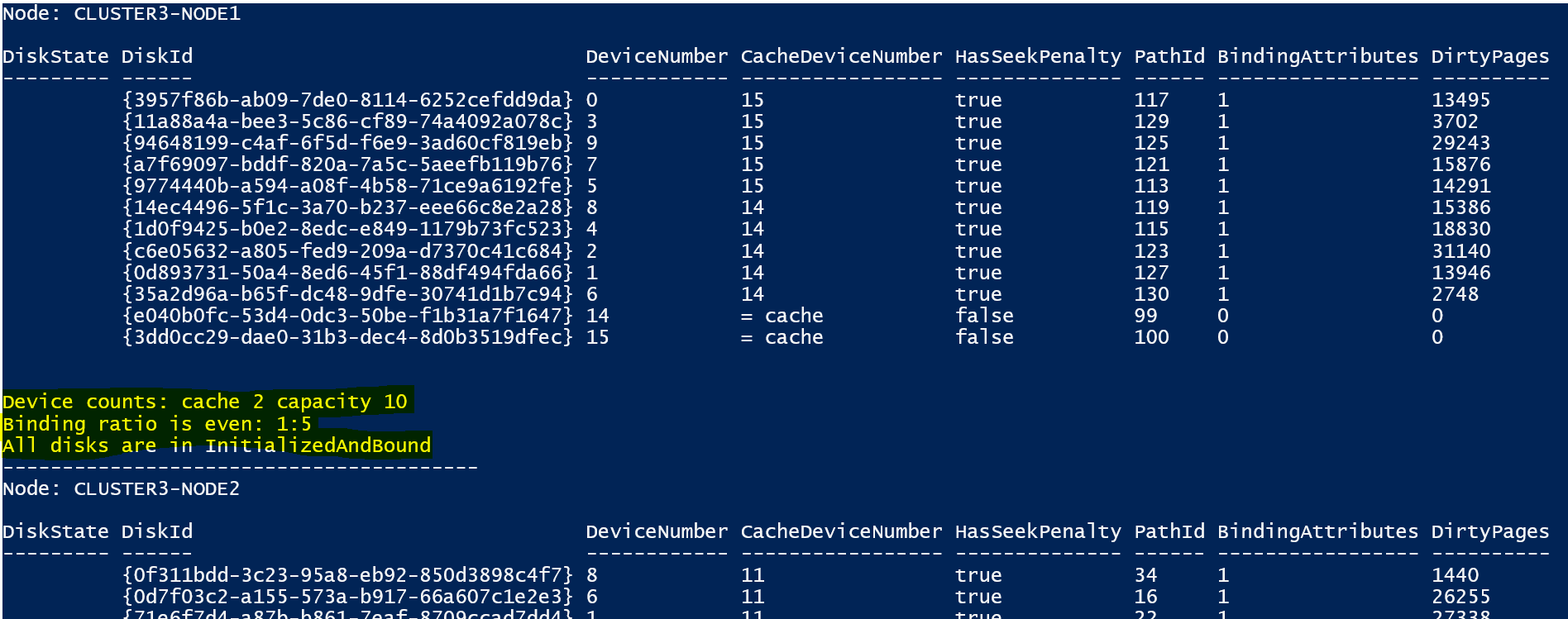

The script will set up a remote session with each cluster node and generate the cluster log. It then reads through the log to find the disks section and reports back:

DOWNLOAD: Get-CacheDiskStatus

Much of the credit for this script goes to this project.

I extracted the part for cache devices and modified the script so it runs remotely.
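To give an idea of the kind of per-node summary such a report can produce, here is a rough Python sketch. It is not the logic of Get-CacheDiskStatus itself. The function name and input shape are hypothetical, and the rule used for "uneven" (any two cache devices with a different number of bound capacity disks) is an assumption. The two warning texts are borrowed from output that readers have reported seeing.

```python
from collections import Counter


def summarise_bindings(cache_devices, bindings):
    """Summarise cache bindings for one node.

    cache_devices: list of cache device numbers on the node.
    bindings: dict mapping capacity device number -> cache device
              number, or None when the capacity disk is unbound.
    """
    # Start every known cache device at zero so unused ones show up.
    per_cache = Counter({cache: 0 for cache in cache_devices})
    for cache in bindings.values():
        if cache is not None:
            per_cache[cache] += 1

    warnings = []
    if any(per_cache[cache] == 0 for cache in cache_devices):
        warnings.append("Not all cache devices in use")
    if len(set(per_cache.values())) > 1:
        # Assumption: any difference in bound-disk counts is "uneven".
        warnings.append("Binding ratios are uneven")

    return {
        "cache": len(cache_devices),
        "capacity": len(bindings),
        "per_cache": dict(per_cache),
        "warnings": warnings,
    }


if __name__ == "__main__":
    # Four cache devices, four capacity disks, one disk per cache.
    even = summarise_bindings([5, 6, 7, 8], {3: 7, 4: 8, 2: 5, 1: 6})
    print(even["warnings"])  # no warnings expected

    # Five cache devices, but 15 capacity disks bound to only two of them.
    skewed = summarise_bindings(
        [1, 2, 3, 4, 5],
        {disk: (1 if disk % 2 else 2) for disk in range(10, 25)},
    )
    print(skewed["per_cache"], skewed["warnings"])
```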

—

Thank you for reading my blog.

If you have any questions or feedback, leave a comment or drop me an email.

Darryl van der Peijl

http://www.twitter.com/DarrylvdPeijl

Good Article. +10

What happens if you have an incorrect ratio? Example. 4 servers. Each with 3 NVMe, 6 SSD, and 10 HDD. Using 3 way mirror on the performance tier and parity on the capacity tier.

Would this have any impact on resiliency?

Hi Joe,

It never has an impact on resiliency. You would have 4 SSDs that each have 2 HDDs bound to them. In other words, those 4 SSDs would get more IO and wear out faster.
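The arithmetic behind that answer can be sketched in a few lines of Python. This is a simplified model of round-robin binding, treating 6 SSDs as cache devices for 10 HDDs as in the reply above. It only counts how many capacity disks land on each cache device and makes no claim about how S2D picks which disk goes where.

```python
def round_robin_bindings(cache_count, capacity_count):
    """Return how many capacity disks each cache device gets
    when capacity disks are handed out one at a time in turn."""
    if cache_count <= 0:
        raise ValueError("need at least one cache device")
    counts = [0] * cache_count
    for disk in range(capacity_count):
        counts[disk % cache_count] += 1
    return counts


if __name__ == "__main__":
    print(round_robin_bindings(6, 10))  # [2, 2, 2, 2, 1, 1]
    print(round_robin_bindings(5, 15))  # [3, 3, 3, 3, 3]
```

With 6 cache devices and 10 capacity disks, four cache devices carry two disks and two carry one, which is the uneven load described above. With 5 and 15 the ratio comes out as a clean 1:3.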

Hi

So what do I do when I get an uneven ratio? Is there any way to create the bindings manually, or to let S2D reconsider the imbalance?

When adding 6 more hosts to an existing cluster of 6, two hosts failed to end up with the right cache binding.

We have 5 SSD’s and 15 HDD’s in each node. So a 1:3 ratio. This works for all nodes, but two.

I get these warnings on 2 hosts.

WARNING: Not all cache devices in use

WARNING: Binding ratios are uneven

On these 2 nodes, only 1 or 2 cache disks are bound to the capacity disks. Even though all the SSD’s are in the pool and set to Journal usage.

Will Repair-ClusterStorageSpacesDirect do the job ?

Greetings

Hi Richard,

First, let me ask whether all your drives have the status “InitializedAndBound”. If not, you can try running:

Repair-ClusterS2D -RecoverUnboundDrives -Node "NODE1"

Otherwise, you can remove the SSDs from the pool and re-add them.

See this blog post for how to do that: https://jtpedersen.com/2017/11/how-to-rebind-mirror-or-performance-drives-back-to-s2d-cache-device/

Hi,

What if that even does not help? Or it worked for 2 out of 3 drives but the third one does not want to be bound? Any clue about that?

You can try running:

Repair-ClusterS2D -RecoverUnboundDrives -Node "NODE1"

Otherwise, you can remove the SSDs from the pool and re-add them.

See this blog post for how to do that: https://jtpedersen.com/2017/11/how-to-rebind-mirror-or-performance-drives-back-to-s2d-cache-device/

Hi Darryl,

I ran your script. Very handy, thank you.

I only get 20Mb/s write speeds on my 2-node S2D cluster.

Can you help me interpret the output?

Is caching actually working? I can’t see any data whatsoever on my SSDs.

PS C:\WINDOWS\system32> C:\software\s2d\Get-CacheDiskStatus\Get-CacheDiskStatus.ps1

cmdlet Get-CacheDiskStatus.ps1 at command pipeline position 1

Supply values for the following parameters:

ClusterName: ipdscluster

----------------------------------------

Node: SVR1

DiskState DiskId DeviceNumber CacheDeviceNumber HasSeekPenalty PathId BindingAttributes DirtyPages

--------- ------ ------------ ----------------- -------------- ------ ----------------- ----------

InitializedAndBound {7b5c16c4-652d-3456-556f-e52fe448304d} 3 7 true 19 1 6185348

InitializedAndBound {636f70d2-428e-9b50-a957-630c74751c1a} 4 8 true 15 1 6910859

InitializedAndBound {f94b64d0-c961-d615-5fe9-f6bfa236dba5} 2 5 true 17 1 5970198

InitializedAndBound {a1401063-fa23-d883-ba5b-852e6c10b950} 1 6 true 21 1 2038561

InitializedAndBound {450bbed0-ee38-7533-2143-685469340815} 8 = cache false 13 0 0

InitializedAndBound {c5966ba4-311e-3c14-ed43-433cded6b09b} 6 = cache false 9 0 0

InitializedAndBound {18130da3-83e2-ef01-6017-8edd6b050036} 7 = cache false 11 0 0

InitializedAndBound {796bf77d-b395-87a8-66e4-cba59819b28b} 5 = cache false 7 0 0

Device counts: cache 4 capacity 4

Binding ratio is even: 1:1

All disks are in InitializedAndBound

----------------------------------------

Node: SVR2

DiskState DiskId DeviceNumber CacheDeviceNumber HasSeekPenalty PathId BindingAttributes DirtyPages

--------- ------ ------------ ----------------- -------------- ------ ----------------- ----------

InitializedAndBound {eef9fb90-9ca3-67a3-ed29-ff4a97c2278d} 2 7 true 15 1 60

InitializedAndBound {0d342a92-ea9c-61f9-eea7-fabc6037b935} 3 6 true 17 1 74

InitializedAndBound {415ea052-ccfb-e04d-db54-77f178568b56} 1 8 true 21 1 6

InitializedAndBound {bfaffddc-e5d6-83d1-625a-e165ef97dcb4} 4 5 true 19 1 6

InitializedAndBound {e2300fef-b71b-8513-594e-8d139775bf51} 5 = cache false 7 0 0

InitializedAndBound {b4c49cb0-4f1d-b73b-a20c-c32945e3c386} 8 = cache false 13 0 0

InitializedAndBound {48b3c541-6f8e-864d-88de-af3f4ed63f04} 6 = cache false 9 0 0

InitializedAndBound {00663d2f-abd6-0ffc-0d28-1b3d49773754} 7 = cache false 11 0 0

Device counts: cache 4 capacity 4

Binding ratio is even: 1:1

All disks are in InitializedAndBound


Hi Olaf,

According to the output all cache devices are bound to a capacity device and it should be working.

But I also see you have no dirty pages. Try to get more insight into the usage of your SSDs in Windows Admin Center. Is there any IO happening?

Hi Darryl, Thanks for the response.

It seems the 8 SSDs are doing just about nothing. They show a bit of write activity from time to time, but they are all empty. Is there a way to set up a performance volume using just the SSDs to get better performance, as opposed to them being almost wasted now?

Thanks again for your time and knowledge. Much appreciated.

Olaf

What happens if we want to remove cache from a running production cluster? This would be a 3way mirror with one cache disk failed.

I was thinking about “Set-ClusterS2D -CacheState Disabled”

Hi Brad,

That command will disable the caching completely.

If a cache device has failed you can retire it and then you can replace it.

Hello Darryl

Thank you for the script. It helped when I had a failed cache disk and all the other disks bound to that cache got IO errors. I saw that the capacity drives did not fail over to the second cache, hence the IO errors.

I have replaced the failed cache disk and, because of that, some capacity drives as well. The disks that are no longer present in the server show as unbound, and one is still bound to the old dead cache. These disks don’t show up when I run Get-PhysicalDisk.

Is this normal behaviour, and will it be cleared automatically?

DiskState DiskId DeviceNumber CacheDeviceNumber

--------- ------ ------------ -----------------

Missing {b2a1623e-376c-1fd6-2751-d950add329f2} 10 = unbound

Missing {89f5434e-3490-fc23-3427-8c74d03b90c3} 8 = unbound

Reset {7df9036e-76c4-fd90-4db2-9da9b47faa2e} 10 = unbound

InitializedAndBound {3ffed778-04d5-2e5a-5d43-ed5a6bc41eed} 5 9

InitializedAndBound {c038c69d-113c-a63c-9ea2-ecf7253678e7} 2 9

InitializedAndBound {5624cd6a-08d6-8702-bccd-b9eb7125c36e} 10 9

InitializedAndBound {baa37027-7253-2fcf-61af-b46a2f6a9555} 0 9

Missing {c32dc5c8-e25c-af36-cb6f-3e48b00b7d71} 1 8

InitializedAndBound {25bf3eb3-ef67-95f3-0fbd-51e11a21bd97} 3 1

InitializedAndBound {bcc2b018-3e9c-2c17-c0df-42525539d98b} 4 1

InitializedAndBound {ca25777e-90a4-3604-948b-75532526a142} 6 1

InitializedAndBound {970c5e75-9b23-4f8e-2448-c98c2dbe751a} 1 = cache

InitializedAndBound {62664c83-749a-26ca-de1c-772e44a1552f} 9 = cache