In this multi-part blog series I’m going to try to clear some fog around the networking parts needed in Windows Server 2016, especially when using Storage Spaces (Direct).

I’ll be touching on multiple areas, including things that were new to me (I’m not a network guy) such as DCB and PFC, but also recommendations on the well-known RSS, Jumbo frames, VMQ and VMMQ.

Sometimes I refer to an external source because they explain it much better than I can 😉 The goal is for you to understand the whole spectrum of things needed by the time you’re done with the last part of this series.

Part 1: RDMA, DCB, PFC, ETS, etc

Part 2: Configuring DCB in Windows

Part 3: Optimizing Network settings

Part 4: Test DCB and RDMA (coming)

Hyper-converged infrastructures are forcing us to change the way we configure networking, especially with technologies such as RDMA (Remote Direct Memory Access) coming into play, which allow us to increase network throughput drastically. Windows Server 2016 supports all the features needed to manage your network and traffic flows so that your systems handle traffic efficiently.

Remote Direct Memory Access & SMB Direct

RDMA is a networking technology that provides high-throughput, low-latency communication that minimizes CPU usage. Windows Server currently supports the following RDMA technologies:

- Infiniband (IB)

- Internet Wide Area RDMA Protocol (iWARP)

- RDMA over Converged Ethernet (RoCE)

RDMA optimizes the network by having the network adapter place data directly into the memory of the destination host, bypassing the CPU and the intermediate buffer copies of the regular network stack. This keeps the overhead for network traffic minimal and helps a lot latency-wise. With constantly evolving network hardware making ever higher speeds possible, we want to keep the CPU out of the data path, because it would eventually become a scalability bottleneck. In other words, the CPU cannot keep up with the network capabilities.

SMB Direct is an extension of the SMB technology by Microsoft used for file operations. The Direct part implies the use of various high speed Remote Direct Memory Access (RDMA) methods to transfer large amounts of data with little CPU intervention. In Windows Server 2012 and later, the Network Direct Kernel Provider Interface (NDKPI) enables the SMB server and client to use remote direct memory access (RDMA) functionality that is provided by the hardware vendors.

I highly recommend RDMA capable NICs when doing Storage Spaces Direct clusters.
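To get a first impression of whether the NICs in a host are RDMA capable, something like the following could be used. This is a minimal sketch that assumes the NetAdapter and SMB PowerShell modules shipped with Windows Server are available; the output depends entirely on your hardware and drivers.

```powershell
# List the network adapters that expose RDMA and whether it is enabled on them.
Get-NetAdapterRdma

# List the interfaces as seen by the SMB client, including whether SMB
# considers them RDMA capable (this is what SMB Direct relies on).
Get-SmbClientNetworkInterface
```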

Data-Center Bridging

Data-Center Bridging (DCB) is an extension to the Ethernet protocol that makes dedicated traffic flows possible in a converged network scenario. DCB distinguishes traffic flows by tagging the traffic with a specific value (0-7) called a “CoS” value which stands for Class of Service. CoS values can also be referred to as “Priority” or “Tag”. Note that every node in the network (switches and servers) needs to have DCB enabled and configured consistently for DCB to work.
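As an illustration of how traffic gets its CoS value on the Windows side, the sketch below tags SMB Direct traffic. The policy name and priority 3 are assumptions picked for the example, and the cmdlets assume the DCB feature is installed.

```powershell
# Tag SMB Direct (RDMA) traffic on port 445 with 802.1p priority 3.
# "SMB" and the value 3 are example choices, not requirements.
New-NetQosPolicy "SMB" -NetDirectPortMatchCondition 445 -PriorityValue8021Action 3

# Review the policies that are currently defined on this host.
Get-NetQosPolicy
```

Whatever value you pick has to match on every switch and server in the path.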

Enabling DCB also brings support for lossless transmission of network packets. With latency-sensitive network traffic, such as Storage Spaces Direct or Storage Replica traffic over the SMB protocol, we would like to have a lossless network, making sure that every packet that has been sent actually reaches “the other side”. Normally, lost data packets are not a big issue as they get retransmitted by TCP/IP, but with storage traffic, lost packets kill your performance because they introduce I/O delays, which is unacceptable. DCB uses the CoS values to specify which traffic must be transmitted losslessly through the network; network switches typically support up to three lossless CoS values.

Priority-based Flow Control

In standard Ethernet we have the ability to pause traffic when the receive buffers are getting full. The downside of Ethernet pause is that it pauses all traffic on the link. As the name already gives away, Priority-based Flow Control (PFC) can pause traffic per flow based on its priority; in other words, PFC sends pause frames for a specific CoS value. This way we can manage flow control selectively for the traffic that requires it, such as storage traffic, without impacting other traffic on the link.

In the following example we’re using three CoS values. As you can see, only the storage traffic is paused when the buffers are getting full, while cluster traffic just keeps going.

This keeps the server reachable for the other servers in the cluster; if it became unreachable, all of its cluster roles would fail over.

The receive buffers can fill up when, for example, I/O to the disks cannot be completed fast enough.
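On a Windows host, selective flow control along these lines could look roughly like the sketch below. It assumes the DCB feature is installed and that storage traffic is tagged with priority 3, which is an example value rather than a requirement.

```powershell
# Make the assumed storage priority (3) lossless by enabling PFC for it.
Enable-NetQosFlowControl -Priority 3

# Keep PFC off for all other priorities so that only storage traffic can be paused.
Disable-NetQosFlowControl -Priority 0,1,2,4,5,6,7

# Show the resulting per-priority flow control state.
Get-NetQosFlowControl
```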

Enhanced Transmission Selection

With DCB in place, the traffic flows are nicely separated from each other and can be paused independently thanks to PFC, but PFC does not provide any Quality of Service (QoS). If your servers are able to fill the entire network pipe with storage traffic alone, other traffic such as cluster heartbeat or tenant traffic may be put in jeopardy.

The purpose of Enhanced Transmission Selection (ETS) is to allocate bandwidth based on the priority of the traffic flows. The flows still share the same physical pipe, but ETS makes sure that each one gets its specified share of the pipe and prevents the “noisy neighbor” effect.

Note that ETS only guarantees bandwidth for egress traffic.
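To make the idea of a guaranteed share concrete, here is a minimal sketch of an ETS traffic class on a Windows host. The class name, priority 3 and the 50 percent figure are illustrative assumptions, and the cmdlets assume the DCB feature is installed.

```powershell
# Reserve a minimum of 50% of the egress bandwidth for traffic tagged with priority 3.
# The name "SMB", the priority and the percentage are example values.
New-NetQosTrafficClass "SMB" -Priority 3 -BandwidthPercentage 50 -Algorithm ETS

# List all traffic classes; bandwidth not assigned explicitly stays with the default class.
Get-NetQosTrafficClass
```

The percentage is a minimum under contention, not a cap: when the link is otherwise idle, a class can use more than its share.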

Data Center Bridging Exchange Protocol

This protocol is better known as DCBX and is also an extension to DCB, where the “X” stands for eXchange.

DCBX can be used to share information about the DCB settings between peers (switches and servers) to ensure you have a consistent configuration on your network.

In other words, you configure DCB on the switch, enable DCBX on the switches and servers, and the servers will receive the DCB information to configure the network cards.

Microsoft recommends disabling DCBX; this probably has to do with vendors implementing it differently and with driver issues.
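Following that recommendation on the Windows side could be sketched as below, assuming the DCB feature is installed. Setting "Willing" to false tells the host not to accept DCB settings pushed by its peer.

```powershell
# Do not accept DCB configuration from the switch via DCBX.
Set-NetQosDcbxSetting -Willing $false -Confirm:$false

# Verify the current DCBX setting.
Get-NetQosDcbxSetting
```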

Configuring Windows to use DCB / PFC / ETS

Now that we’ve got the formalities on terminology out of the way, I can finally start showing how this “network stuff” works in combination with Windows Server.

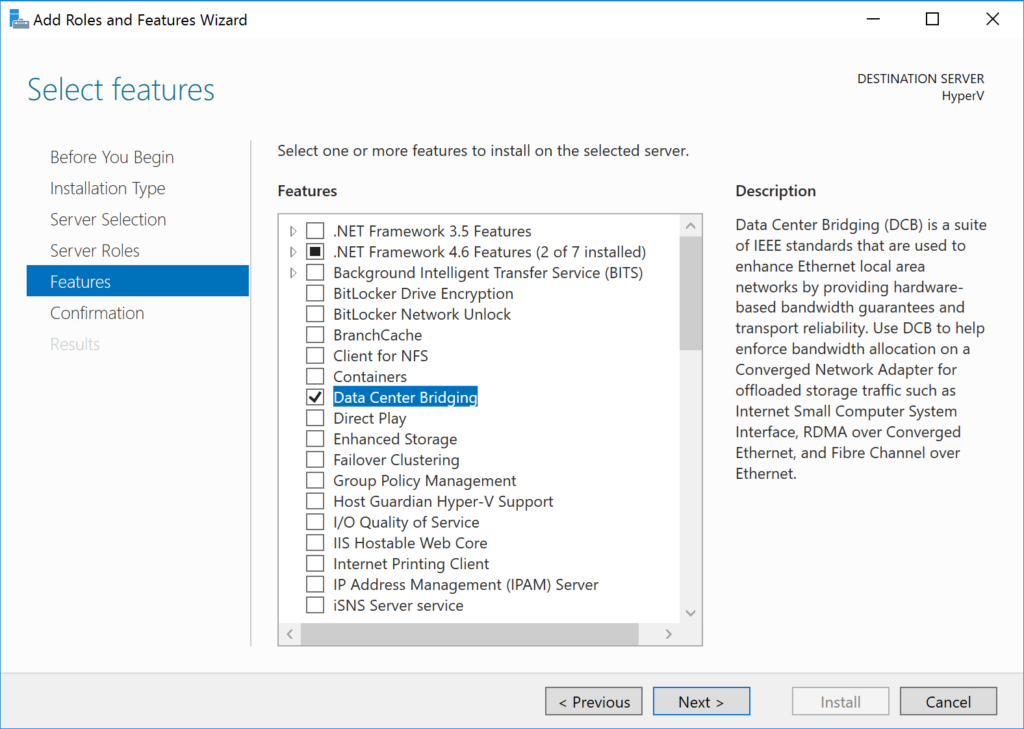

DCB is already included out-of-the-box in Windows Server, but is not installed by default. The only thing we need to do is to enable the feature using Server Manager or our beloved PowerShell.

Installing the DCB feature gets us some new PowerShell cmdlets to work with, which we will touch on in the next part, where we will configure DCB on the Windows side.
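A minimal sketch of the PowerShell route, assuming an elevated session on the server itself:

```powershell
# Install the Data Center Bridging feature (run from an elevated session).
Install-WindowsFeature -Name Data-Center-Bridging

# Confirm that the feature now shows up as installed.
Get-WindowsFeature -Name Data-Center-Bridging
```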

Continue to Part 2: Configuring DCB in Windows >>

—

Thank you for reading my blog.

If you have any questions or feedback, leave a comment or drop me an email.

Darryl van der Peijl

http://www.twitter.com/DarrylvdPeijl

Hi,

This is an excellent article. Regarding bandwidth allocation using VM-QOS and ETS: what is the difference between the following PowerShell commands?

New-NetQosTrafficClass "SMB" -Priority 3 -BandwidthPercentage 50 -Algorithm ETS

(AND) the following

Set-VMNetworkAdapter -Name SMB1 -ManagementOS -ieeePriorityTag On

Set-VMNetworkAdapter -Name SMB2 -ManagementOS -ieeePriorityTag On

Set-VMNetworkAdapter -Name SMB1 -ManagementOS -MinimumBandwidthWeight 50

Set-VMNetworkAdapter -Name SMB2 -ManagementOS -MinimumBandwidthWeight 50

Allocating 50% for SMB1 and SMB2 each would amount to 100% or 50% in total for SMB traffic?

Does this mean in SCVMM2016, the wizard bandwidth allocation is based on VM-QOS? If so, Configuring these values in SCVMM, would be enough or do we have to configure these values both in SCVMM and Hyper-V hosts using powershell?

Also, do we have to configure bandwidth allocation on the switches as well, or would just enabling PFC and DCB and configuring PFC for SMB be enough? We are using Mellanox switches. Can you direct me to what needs configuring on a Mellanox switch, based on the following?

####### This is an Example Script#######

#####Cleanup DCB configuration (if any)

Get-NetQosPolicy | Remove-NetQosPolicy -Confirm:$False

Disable-NetQosFlowControl -Priority 0,1,2,3,4,5,6,7

Get-NetQosTrafficClass | Remove-NetQosTrafficClass -Confirm:$False

###### Disable DCBx

Set-NetQosDcbxSetting -Willing $false -Confirm:$False

##### Set tagging policies

New-NetQosPolicy "SMB" -NetDirectPortMatchCondition 445 -PriorityValue8021Action 3

New-NetQosPolicy -Name "Live Migration" -IPDstPort 6600 -PriorityValue8021Action 2

New-NetQosPolicy "Cluster" -IPDstPort 3343 -PriorityValue8021Action 5

#### Turn on Flow Control (Lossless) for SMB

Enable-NetQosFlowControl -Priority 3

#### Make sure flow control is off for other traffic

Disable-NetQosFlowControl -Priority 0,1,2,4,5,6,7

###### Enable QoS on Adapters

Get-NetAdapterQos | Enable-NetAdapterQos

##### Configure ETS with Bandwidth minimums, default class will take the remaining 25%

New-NetQosTrafficClass "SMB" -Priority 3 -BandwidthPercentage 50 -Algorithm ETS

New-NetQosTrafficClass "Live Migration" -Priority 2 -BandwidthPercentage 20 -Algorithm ETS

New-NetQosTrafficClass "Cluster" -Priority 5 -BandwidthPercentage 5 -Algorithm ETS

##### ETS can be set at SDN-QOS and VM-QOS

##### SDN-QOS is set at hardware level

##### VM-QOS is set at software level and it is set using PowerShell

Set-VMNetworkAdapter -Name SMB1 -ManagementOS -ieeePriorityTag On

Set-VMNetworkAdapter -Name SMB2 -ManagementOS -ieeePriorityTag On

Set-VMNetworkAdapter -Name "Live Migration" -ManagementOS -ieeePriorityTag On

Set-VMNetworkAdapter -Name "Cluster" -ManagementOS -ieeePriorityTag On

Set-VMNetworkAdapter -Name SMB1 -ManagementOS -MinimumBandwidthWeight 50

Set-VMNetworkAdapter -Name SMB2 -ManagementOS -MinimumBandwidthWeight 50

Set-VMNetworkAdapter -ManagementOS -Name "Live Migration" -MinimumBandwidthWeight 20

Set-VMNetworkAdapter -ManagementOS -Name "Cluster" -MinimumBandwidthWeight 5

I really appreciate your help.

> what is the difference between the following powershell commands

New-NetQosTrafficClass = Hardware QoS

Set-VMNetworkAdapter = VM QoS

>Allocating 50% for SMB1 and SMB2 each would amount to 100% or 50% in total for SMB traffic?

It is based on NIC level

> Does this mean in SCVMM2016, the wizard bandwidth allocation is based on VM-QOS?

Yes

>Configuring these values in SCVMM, would be enough or do we have to configure these values both in SCVMM and Hyper-V hosts using powershell?

VMM does not configure ETS and PFC (Hardware QoS)

> Also, do we have to configure bandwidth allocation on Switches as well? or just enabling PFC,DCB and configuring PFC for SMB would be enough?

Yes the switch also needs to know which packets with which priorities are important, see https://community.mellanox.com/docs/DOC-2778

Thank you for your reply. I have already looked at that article. It seems that the switch configuration and the Windows configuration only cover SMB traffic, with PFC on priority 3 and DCB ETS at 50%, but I don’t see the 50% allocation in the switch configuration. Does this mean setting ETS in Windows with the PowerShell command is enough? I think enabling DCB and PFC in the switch configuration, as outlined in the Mellanox switch configuration, automatically allocates buffer size and PFC. Does this also enable ECN, or do we have to configure this both in Windows and on the switch? If so, how? Also, if I want to enable PFC for Live Migration traffic and allocate 30% bandwidth to it, what additional configuration do I need to add in Windows and on the switch? I would appreciate your help.

Remember that CoS is a priority flag that sits on layer 2 and is ONLY available when VLAN tagging is turned on. So if you are running a server that you want to add DCB capabilities to, you will also want to make sure you enable VLAN tagging on both that server and the switch it is connected to.

I’m curious as to why DCB didn’t have an option to use DSCP (layer 3), which has 64 values and doesn’t require VLAN tagging.